This taxonomy is written for technical buyers, architects, and policymakers evaluating agent infrastructure. The relative maturity of each layer is shifting quickly; the framework is intended to be durable, but the balance of where real safety lives today versus where it will live in two years is itself in motion.

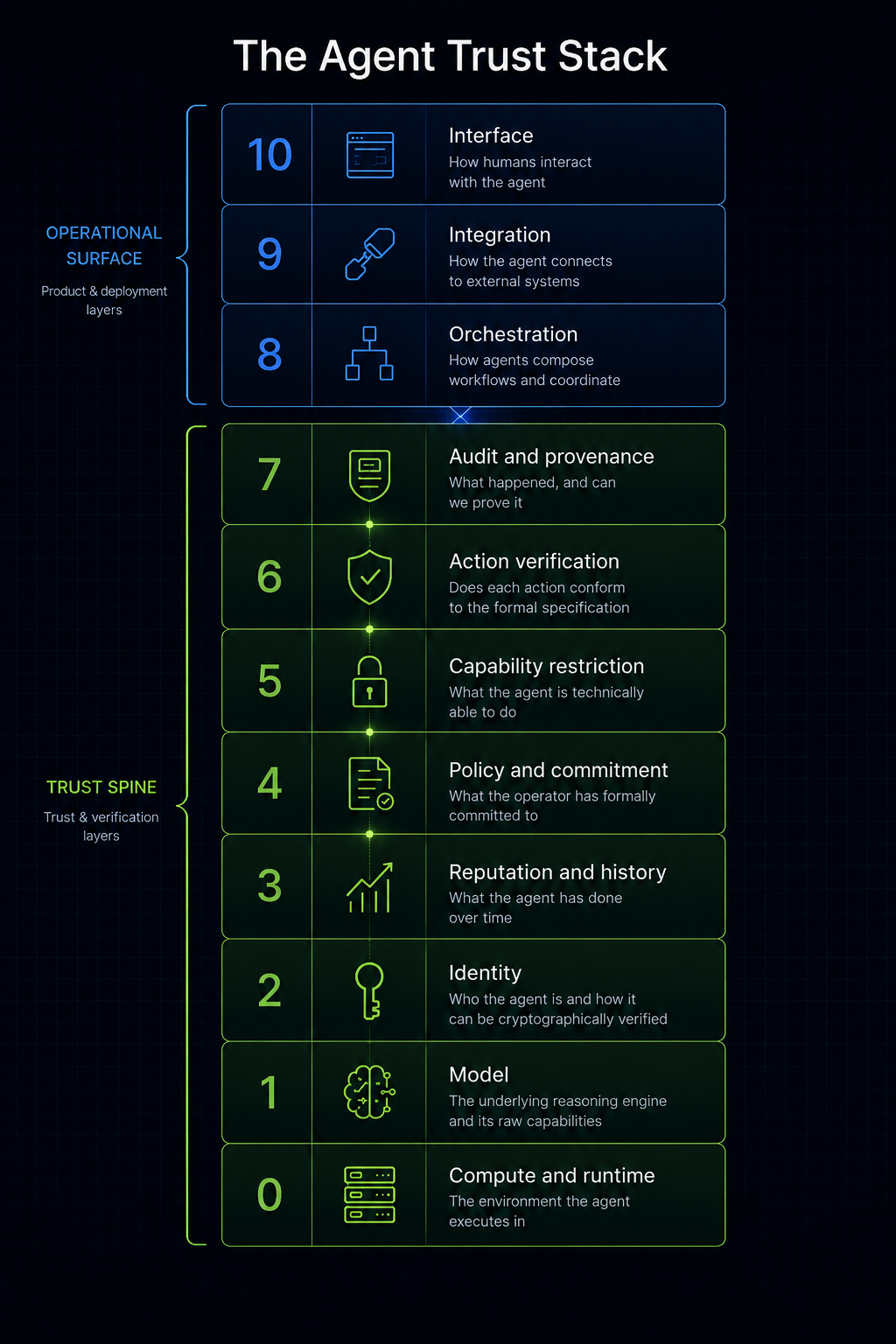

Agent trust is often discussed as though it were a single property. It is not. A relying party may need to verify who the agent is, what it is allowed to do, what it actually did, whether its actions conformed to a formal specification, and whether the execution environment itself was trustworthy.

Those are different questions, and they belong to different layers of the stack. Conflating them is the most common source of confusion in agent infrastructure conversations, and it is what allows vendors operating at one layer to claim guarantees that only make sense at another.

The first eight layers (Layers 0 through 7) establish whether and how an agent can be trusted. The final three determine how that trust is operationalized in real workflows. Both halves matter, but they answer different questions and should be evaluated against different criteria.

Layer 0. Compute and runtime

The question this layer answers: What physical and virtual environment is the agent actually executing in, and can that environment be trusted?

This is hardware (CPU/GPU), the operating system, container runtime, and, for high-assurance deployments, trusted execution environments like AWS Nitro Enclaves, Intel TDX, or AMD SEV-SNP. The output of this layer is attestation: cryptographic evidence that a specific binary, with a specific configuration, is running in an untampered environment.

Examples include bare-metal servers, Kubernetes pods, TEE-wrapped containers, and edge devices. This layer is invisible in most agent conversations but becomes central when regulators or counterparties demand proof that the agent's runtime has not been tampered with.
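To make attestation concrete, here is a minimal sketch of the relying-party side. The verifier recomputes the expected measurement of a known-good binary and configuration. It then accepts a report only if the report's measurement matches, its nonce is the one the verifier issued, and its signature checks out. The report structure, field names, and key are hypothetical. Real Nitro, TDX, and SEV-SNP attestation documents have vendor-specific formats and are signed through hardware-rooted certificate chains. An HMAC over a shared key stands in for that signature here only so the example runs on the Python standard library.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationReport:
    """Hypothetical report shape; real TEE formats are vendor-specific."""
    measurement: str  # hex digest covering the loaded binary and its config
    nonce: str        # challenge issued by the relying party, for freshness
    signature: bytes  # stand-in for a hardware-rooted signature


def measure(binary: bytes, config: bytes) -> str:
    """Combine hashes of the binary and its configuration into one measurement."""
    h = hashlib.sha256()
    h.update(hashlib.sha256(binary).digest())
    h.update(hashlib.sha256(config).digest())
    return h.hexdigest()


def sign_report(key: bytes, measurement: str, nonce: str) -> bytes:
    # HMAC stands in for the asymmetric signature a real TEE would produce.
    return hmac.new(key, f"{measurement}|{nonce}".encode(), hashlib.sha256).digest()


def verify_report(report: AttestationReport, key: bytes,
                  golden_measurement: str, issued_nonce: str) -> bool:
    """Accept only a correctly signed, fresh report of the expected build."""
    expected_sig = sign_report(key, report.measurement, report.nonce)
    return (hmac.compare_digest(report.signature, expected_sig)
            and hmac.compare_digest(report.measurement, golden_measurement)
            and report.nonce == issued_nonce)


KEY = b"stand-in-for-hardware-root"
golden = measure(b"agent-build-1.4.2", b"egress=allowlist")
good = AttestationReport(golden, "n-123", sign_report(KEY, golden, "n-123"))
patched = measure(b"agent-build-1.4.2-patched", b"egress=allowlist")
bad = AttestationReport(patched, "n-123", sign_report(KEY, patched, "n-123"))

print(verify_report(good, KEY, golden, "n-123"))  # True
print(verify_report(bad, KEY, golden, "n-123"))   # False: unexpected build
```

The nonce matters as much as the measurement. Without it, a report captured from an honest run could be replayed later to vouch for a tampered one.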

Layer 1. Model

The question this layer answers: What is the underlying reasoning engine, and what are its raw capabilities and behaviors?

The LLM itself, along with model weights, inference infrastructure, temperature and sampling parameters, and context window management. This is the non-deterministic core. Everything above this layer exists to channel, constrain, or audit the model's outputs.

Choice of model is increasingly orthogonal to the rest of the stack because most serious agent platforms are LLM-agnostic; the model is treated as a swappable component, which is the right architectural decision given how fast models churn.
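In practice, the swappable-model pattern means the rest of the stack depends on a narrow interface rather than on a vendor SDK. The following is a minimal sketch that assumes a hypothetical `Model` protocol with a single `complete` method. The two backends are toy stand-ins for real inference clients.

```python
from typing import Protocol


class Model(Protocol):
    """Hypothetical interface that everything above Layer 1 depends on."""
    def complete(self, prompt: str, temperature: float = 0.0) -> str: ...


class EchoBackend:
    """Toy backend; a real one would call a provider's inference endpoint."""
    def complete(self, prompt: str, temperature: float = 0.0) -> str:
        return f"echo: {prompt}"


class ShoutBackend:
    """A second toy backend, to show that callers do not change when swapping."""
    def complete(self, prompt: str, temperature: float = 0.0) -> str:
        return prompt.upper()


def plan_step(model: Model, task: str) -> str:
    # Orchestration code sees only the Model interface, never a vendor SDK,
    # so replacing the backend is a configuration change rather than a rewrite.
    return model.complete(f"Plan: {task}", temperature=0.2)


for backend in (EchoBackend(), ShoutBackend()):
    print(plan_step(backend, "reconcile invoices"))
# echo: Plan: reconcile invoices
# PLAN: RECONCILE INVOICES
```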

Layer 2. Identity

The question this layer answers: Who is this agent, who operates it, and how can a relying party cryptographically verify the answer?

Keypair management, agent registration in a directory, signed request headers, identity challenges, and operator binding. The output of this layer is verifiable identity attribution: a relying party can know with cryptographic certainty that a given action originated from a specific registered agent operated by a specific declared entity.

This layer says nothing about behavior; it only establishes the subject of any behavioral or trust claim. Without it, every other trust mechanism is anchored to nothing.

A concrete failure mode: an agent system without a Layer 2 binding cannot distinguish a legitimate agent making a permitted call from a spoofed request impersonating that agent, which makes every downstream guarantee meaningless because there is no verifiable subject to apply it to.
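Here is a minimal sketch of signed request headers checked against a registry. It assumes hypothetical header names (`X-Agent-Id`, `X-Timestamp`, `X-Signature`) and a hypothetical in-memory `DIRECTORY`. Production systems would register public keys (for example Ed25519), so relying parties never hold signing secrets. HMAC appears here only to keep the example on the standard library and should not be read as the recommended scheme. The sketch illustrates the point made above: a request either verifies against a registered agent and its declared operator, or it has no attributable subject at all.

```python
import hashlib
import hmac
import time

# Hypothetical registry: agent_id -> (declared operator, verification key).
# A real directory would hold public keys (e.g. Ed25519); shared HMAC secrets
# are used here only so the sketch runs on the standard library.
DIRECTORY = {"agent-42": ("Acme Corp", b"agent-42-secret")}


def _mac(key: bytes, agent_id: str, ts: int, body: bytes) -> str:
    message = f"{agent_id}|{ts}|".encode() + body
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def sign_request(agent_id: str, key: bytes, body: bytes, ts: int) -> dict:
    """Produce hypothetical signed headers binding agent, time, and body."""
    return {"X-Agent-Id": agent_id, "X-Timestamp": str(ts),
            "X-Signature": _mac(key, agent_id, ts, body)}


def verify_request(headers: dict, body: bytes, now: int, max_skew: int = 300):
    """Return (agent_id, operator) if the request is attributable, else None."""
    agent_id = headers.get("X-Agent-Id", "")
    entry = DIRECTORY.get(agent_id)
    if entry is None:
        return None  # unregistered agent: no verifiable subject
    operator, key = entry
    ts = int(headers.get("X-Timestamp", "0"))
    if abs(now - ts) > max_skew:
        return None  # stale timestamp, possibly a replay
    if not hmac.compare_digest(_mac(key, agent_id, ts, body),
                               headers.get("X-Signature", "")):
        return None  # spoofed or altered request
    return agent_id, operator


now = int(time.time())
body = b'{"action": "read_balance"}'
genuine = sign_request("agent-42", b"agent-42-secret", body, now)
spoofed = sign_request("agent-42", b"attacker-guess", body, now)
print(verify_request(genuine, body, now))  # ('agent-42', 'Acme Corp')
print(verify_request(spoofed, body, now))  # None
```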

Layer 3. Reputation and history

The question this layer answers: What has this agent actually done over time, and how have prior interactions gone?

Behavioral history aggregation, trust scores, violation reports, dispute outcomes, and governance feeds. Builds on Layer 2 because reputation requires stable identity to accumulate against.

Reputation is empirical and historical rather than predictive. It answers "what is this agent's track record?" rather than "what will this agent do next?" It is roughly analogous to credit scoring or merchant ratings in payment networks.

The underlying inputs at this layer typically include volume of prior activity, outcome reliability, formal violation history, and verification events, which can be exposed as component signals or aggregated into a composite trust score depending on the consumer's needs. Both shapes are legitimate: composite scores enable fast threshold-based decisions and integrate easily into existing trust workflows, while component signals allow sophisticated relying parties to weight inputs against their specific use case.
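Both shapes can be exposed from the same inputs. Each signal is normalized into a component score, and the composite is derived as a weighted average of those components. The normalization curves and weights below are hypothetical placeholders, not a calibrated model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReputationSignals:
    prior_actions: int   # volume of prior activity
    success_rate: float  # outcome reliability, 0.0 to 1.0
    violations: int      # formal violations on record
    verifications: int   # verification events passed


# Hypothetical weights; a production score would be calibrated on real outcomes.
WEIGHTS = {"volume": 0.2, "reliability": 0.5, "violations": 0.2, "verification": 0.1}


def component_scores(s: ReputationSignals) -> dict:
    """Normalize each raw signal to 0.0-1.0 so consumers can weight them."""
    return {
        "volume": min(s.prior_actions / 1000, 1.0),
        "reliability": s.success_rate,
        "violations": 1.0 / (1 + s.violations),  # each violation lowers the score
        "verification": min(s.verifications / 10, 1.0),
    }


def composite_score(s: ReputationSignals, weights: dict = WEIGHTS) -> float:
    """Weighted average of the component scores."""
    parts = component_scores(s)
    return sum(w * parts[k] for k, w in weights.items()) / sum(weights.values())


veteran = ReputationSignals(prior_actions=5000, success_rate=0.98, violations=0, verifications=12)
newcomer = ReputationSignals(prior_actions=40, success_rate=1.0, violations=0, verifications=1)
print(round(composite_score(veteran), 3))   # 0.99
print(round(composite_score(newcomer), 3))  # 0.718
```

A relying party that wants a fast decision can compare `composite_score` against a threshold. One with more specific needs can apply its own weights to `component_scores`. Either way, the result is an input to a trust decision.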

A concrete failure mode this layer addresses: an agent with a history of policy violations across prior deployments would be invisible to a new counterparty without a reputation layer, allowing the same patterns to repeat across victims who cannot learn from each other's experience.

Reputation produces probabilistic signals rather than guarantees and is properly understood as input to a trust decision rather than a substitute for the enforcement mechanisms at Layers 5 and 6.

Layer 4. Policy and commitment

The question this layer answers: What has the operator formally committed the agent will and will not do?

Operator-published, signed, versioned declarations of agent scope, capabilities, action limits, refusal patterns, and escalation rules. These commitments can live in a public registry or be self-hosted by the operator and referenced by hash.

This is the contract: the formal statement of what the agent is supposed to be. Critically, this layer makes commitments; it does not enforce them. Enforcement happens at Layers 5 and 6.

The value of this layer is that it converts vague vendor promises into specific, signed, auditable commitments that have legal and reputational weight even before any technical enforcement is layered on top.

Signed commitments are a distinct trust artifact in their own right, separate from both the identity that signs them and the enforcement that follows them. They are the object a court, a regulator, or a counterparty can point to when asking what the operator promised.
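For commitments that are self-hosted and referenced by hash, the relying party's check is simple. It fetches the document, canonicalizes it, hashes it, and compares the result against the reference the operator published. The sketch below assumes a JSON policy with hypothetical field names. It uses sorted-key JSON as a simplified stand-in for a full canonicalization scheme such as RFC 8785 (JCS). Only the hash reference is covered; signing the hash with the operator's Layer 2 key is omitted.

```python
import hashlib
import json


def canonical_bytes(policy: dict) -> bytes:
    # Sorted keys and fixed separators give a stable encoding, so the same
    # commitment always hashes to the same value. A simplified stand-in for
    # a full canonicalization scheme such as RFC 8785 (JCS).
    return json.dumps(policy, sort_keys=True, separators=(",", ":")).encode("utf-8")


def commitment_hash(policy: dict) -> str:
    return "sha256:" + hashlib.sha256(canonical_bytes(policy)).hexdigest()


def matches_reference(fetched: dict, referenced_hash: str) -> bool:
    """True only if the fetched policy is exactly the committed version."""
    return commitment_hash(fetched) == referenced_hash


# Hypothetical policy fields for illustration.
policy_v3 = {
    "agent_id": "agent-42",
    "version": 3,
    "max_order_usd": 5000,
    "escalate_above_usd": 2000,
    "refuses": ["wire_transfer", "new_vendor_onboarding"],
}
reference = commitment_hash(policy_v3)  # what the operator publishes

quietly_edited = {**policy_v3, "max_order_usd": 50000}
print(matches_reference(policy_v3, reference))       # True
print(matches_reference(quietly_edited, reference))  # False
```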

Layer 5. Capability restriction

The question this layer answers: What can the agent physically do, given the credentials and network access it has been granted?

API tokens scoped to specific endpoints, network egress allowlists, IAM roles, OAuth scopes, payment rail spending limits, and read-only versus read-write credential separation.

This is the layer that converts "the agent should not do X" into "the agent literally cannot do X." It is the most powerful safety mechanism in practice today because it is well-understood, deterministic, and does not depend on any model behavior.

A concrete failure mode this layer prevents: an agent given full read-write credentials to a payment system can transfer funds to any account if its reasoning is manipulated. The same agent given a credential scoped only to read account balances and write to an allowlist of pre-approved recipients cannot, regardless of what its reasoning produces.
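The scoped credential in that example can be sketched as a check enforced by the service that accepts the credential, entirely outside the model. `ScopedCredential`, `authorize`, and the scope names are hypothetical. In practice, the same constraints would be expressed through OAuth scopes, IAM policies, or limits configured on the payment rail.

```python
from dataclasses import dataclass


class PermissionDenied(Exception):
    pass


@dataclass(frozen=True)
class ScopedCredential:
    """Hypothetical credential, enforced by the service that accepts it."""
    scopes: frozenset
    recipient_allowlist: frozenset = frozenset()
    per_transfer_limit_cents: int = 0


def authorize(cred: ScopedCredential, action: str, **params) -> None:
    """Raise PermissionDenied unless the credential permits this exact call.

    Runs outside the model, so no prompt or reasoning output can change it.
    """
    if action not in cred.scopes:
        raise PermissionDenied(f"scope {action!r} not granted")
    if action == "transfer":
        if params.get("recipient") not in cred.recipient_allowlist:
            raise PermissionDenied("recipient not on allowlist")
        if params.get("amount_cents", 0) > cred.per_transfer_limit_cents:
            raise PermissionDenied("amount exceeds per-transfer limit")


cred = ScopedCredential(
    scopes=frozenset({"read_balance", "transfer"}),
    recipient_allowlist=frozenset({"vendor-001", "vendor-002"}),
    per_transfer_limit_cents=100_000,
)
authorize(cred, "read_balance")  # permitted: returns None
authorize(cred, "transfer", recipient="vendor-001", amount_cents=5_000)  # permitted
try:
    authorize(cred, "transfer", recipient="attacker-999", amount_cents=5_000)
except PermissionDenied as err:
    print(err)  # recipient not on allowlist
```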

Most production agents today rely primarily on this layer for safety; the layers above it are still maturing.

Layer 6. Action verification

The question this layer answers: For each specific action the agent proposes, does it conform to the formal specification?

Type checking, formal verification, and contract enforcement against the typed action space. Where Layer 5 says "the agent has the keys to this room," Layer 6 says "and for every action attempted in this room, we check it against the spec before allowing it."

The output is a binary pass or fail per action, with a mathematical guarantee that passing actions conform to the declared formal specification. The guarantee is rigorous within its scope and does not extend to whether the specification itself captures business intent, legal obligation, or common sense; those remain human responsibilities.

A concrete failure mode this layer addresses: a procurement agent with appropriate Layer 5 credentials could still issue a purchase order to an approved vendor for an approved SKU at a price that violates the operator's negotiated contract terms. Layer 6 type-checks the action against the contract specification and rejects it before execution.
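Here is a minimal sketch of the procurement example, assuming a hypothetical `PurchaseOrder` type and a contract table of negotiated per-unit price ceilings. It is a runtime contract check written in Python, a simplified stand-in for the type-level or formally verified checks this layer describes. It shows the shape of the per-action pass/fail decision, not a mathematical guarantee.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class PurchaseOrder:
    vendor: str
    sku: str
    quantity: int
    unit_price: Decimal


# Hypothetical contract spec: negotiated maximum unit price per (vendor, SKU).
CONTRACT = {
    ("acme", "BOLT-M8"): Decimal("0.42"),
    ("acme", "NUT-M8"): Decimal("0.15"),
}


def check(po: PurchaseOrder) -> tuple[bool, str]:
    """Pass/fail decision made before the order is sent, with a reason."""
    ceiling = CONTRACT.get((po.vendor, po.sku))
    if ceiling is None:
        return False, "vendor/SKU pair not covered by the contract"
    if po.quantity <= 0:
        return False, "quantity must be positive"
    if po.unit_price > ceiling:
        return False, f"unit price {po.unit_price} exceeds negotiated {ceiling}"
    return True, "conforms"


# Both orders would pass a Layer 5 check: approved vendor, approved SKU.
print(check(PurchaseOrder("acme", "BOLT-M8", 1000, Decimal("0.40"))))
# (True, 'conforms')
print(check(PurchaseOrder("acme", "BOLT-M8", 1000, Decimal("0.55"))))
# (False, 'unit price 0.55 exceeds negotiated 0.42')
```

`Decimal` is used instead of `float` so that price comparisons against the contract are exact.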

This is the layer where category theory and type theory do their actual work, and where formal-methods approaches differentiate from policy-and-prayer alternatives.

Layer 7. Audit and provenance

The question this layer answers: What did the agent actually do, and can we prove the record is complete and untampered?

Append-only logs, cryptographically signed action traces, transparency logs in the style of Certificate Transparency, and structured explainability traces. Bound to Layer 2 identity so every logged action is attributable.

The output is an undeniable evidence trail: after-the-fact accountability rather than pre-execution prevention. For regulated industries this is often the most important layer because it is what auditors, regulators, and litigators interact with.

It complements Layer 6: even with perfect pre-execution verification, you still need the audit trail to prove conformance to a third party after the fact.
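A hash chain illustrates the tamper-evidence property. Each entry's hash commits to the previous entry's hash, so editing a past record makes it stop matching its stored hash. This is a simplified sketch rather than a Certificate Transparency-style Merkle tree. The field names are hypothetical, and per-entry signatures with the agent's Layer 2 key are omitted. A chain on its own cannot detect a wholesale rewrite or truncation of recent entries. That is why transparency logs publish signed heads that relying parties can check against.

```python
import hashlib
import json

GENESIS = "0" * 64


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash of this record chained to the previous entry's hash."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log: each entry commits to all earlier ones."""

    def __init__(self) -> None:
        self.entries: list[tuple[dict, str]] = []

    def append(self, agent_id: str, action: dict) -> str:
        prev = self.entries[-1][1] if self.entries else GENESIS
        record = {"seq": len(self.entries), "agent_id": agent_id, "action": action}
        digest = entry_hash(prev, record)
        self.entries.append((record, digest))
        return digest  # the current head; publish it externally

    def verify(self) -> bool:
        """Recompute the chain; an edited record no longer matches its stored hash."""
        prev = GENESIS
        for record, digest in self.entries:
            if entry_hash(prev, record) != digest:
                return False
            prev = digest
        return True


log = AuditLog()
log.append("agent-42", {"type": "read_balance"})
head = log.append("agent-42", {"type": "transfer", "to": "vendor-001", "cents": 5000})
print(log.verify())  # True

log.entries[0][0]["action"]["type"] = "noop"  # rewrite history in place
print(log.verify())  # False
```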

---

The first eight layers (Layers 0 through 7) establish whether the agent can be trusted. The remaining three determine how that trust is operationalized in real workflows. They are operational layers rather than trust layers, and they answer product and deployment questions rather than verification questions.

Layer 8. Orchestration

The question this layer answers: How are agents composed into workflows, and how do they coordinate with humans and with each other?

Multi-agent orchestration, workflow engines, human-in-the-loop gates, escalation routing, approval queues, and task decomposition. This is where the actual business workflow lives.

Most agent platforms in the market today are primarily orchestration layers with light touches of the layers below. The hard problem at this layer is not only verifying individual actions but also preserving constraints across the workflow as a whole; properties that hold for each step can still fail in composition.
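Here is a small illustration of a property that holds per step but fails in composition. Each purchase passes a per-order limit, yet together the purchases exceed the workflow's budget. The limits and the halt-on-budget policy are hypothetical. The point is that the budget check needs state spanning the whole workflow, which no single per-action gate has.

```python
# Hypothetical limits, in dollars, for illustration.
PER_ORDER_LIMIT = 2_000
WORKFLOW_BUDGET = 5_000


def step_ok(amount: int) -> bool:
    """Per-action gate, the kind of check Layers 5 and 6 apply to one step."""
    return 0 < amount <= PER_ORDER_LIMIT


def run_workflow(amounts: list[int], enforce_budget: bool) -> tuple[list[int], int]:
    approved, spent = [], 0
    for amount in amounts:
        if not step_ok(amount):
            continue  # rejected individually
        if enforce_budget and spent + amount > WORKFLOW_BUDGET:
            break  # halt here rather than overspend; escalation is out of scope
        approved.append(amount)
        spent += amount
    return approved, spent


plan = [1_800, 1_900, 1_700, 1_950]  # every step passes step_ok on its own
print(run_workflow(plan, enforce_budget=False))  # ([1800, 1900, 1700, 1950], 7350)
print(run_workflow(plan, enforce_budget=True))   # ([1800, 1900], 3700)
```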

Layer 9. Integration

The question this layer answers: How does the agent actually connect to the systems it needs to read from and act on?

ERP connectors, database adapters, API clients, file system access, messaging integrations, and webhook handlers. The unsexy plumbing that determines whether anything actually works in production.

Often 80 percent of the engineering effort in a real deployment lives here. It is dramatically underestimated in most agent infrastructure pitches because it resists generalization. Every customer's enterprise stack is configured differently, so the work scales linearly with the number of deployments instead of being built once.

Layer 10. Interface

The question this layer answers: How do humans interact with the agent, to invoke it, supervise it, approve its actions, and consume its outputs?

Chat interfaces, dashboards, approval queues, notification channels, reporting outputs, and voice interfaces. This is the surface the end user actually touches and forms opinions about.

It often determines adoption more than the technical merit of any layer below it.

How the layers compose in practice

A relying party considering whether to trust an agent works down the trust spine: who is this agent (Layer 2), what is its track record (Layer 3), what has its operator committed to (Layer 4), what can it technically do (Layer 5), is each action conformant (Layer 6), and do we have evidence of what happened (Layer 7)?

An attacker, or an agent misbehaving on its own, has to defeat every relevant layer to cause harm without being stopped or detected. An identity spoof fails at Layer 2. An out-of-scope action attempt fails at Layer 5 or 6. A policy violation that slips through the earlier layers is still caught at Layer 7, after the fact.

The layering is defense in depth.
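The relying party's walk down the trust spine can be sketched as an ordered list of checks. A request is rejected at the first layer that fails, and every outcome, allowed or rejected, is recorded. Each lambda is a hypothetical stand-in for the real mechanism at that layer: signature verification, a reputation threshold, credential scopes, or a conformance check. Layer 4 does not appear as a gate because it makes commitments rather than enforcing them; those commitments are what the Layer 6 check is derived from.

```python
from typing import Callable, NamedTuple


class Request(NamedTuple):
    agent_id: str
    signature_valid: bool  # result of the Layer 2 signature check
    reputation: float      # Layer 3 composite score
    action: str
    amount: int


# Ordered checks; each lambda is a hypothetical stand-in for a real mechanism.
CHECKS: list[tuple[str, Callable[[Request], bool]]] = [
    ("Layer 2 identity", lambda r: r.signature_valid),
    ("Layer 3 reputation", lambda r: r.reputation >= 0.7),
    ("Layer 5 capability", lambda r: r.action in {"read_balance", "transfer"}),
    ("Layer 6 conformance", lambda r: r.action != "transfer" or r.amount <= 2_000),
]


def evaluate(req: Request, audit: list) -> str:
    """Reject at the first failing layer; record every outcome (Layer 7)."""
    outcome = "allowed"
    for layer, check in CHECKS:
        if not check(req):
            outcome = f"rejected at {layer}"
            break
    audit.append((req.agent_id, req.action, outcome))
    return outcome


audit_log: list = []
print(evaluate(Request("agent-42", False, 0.9, "transfer", 100), audit_log))
# rejected at Layer 2 identity
print(evaluate(Request("agent-42", True, 0.9, "delete_account", 0), audit_log))
# rejected at Layer 5 capability
print(evaluate(Request("agent-42", True, 0.9, "transfer", 5_000), audit_log))
# rejected at Layer 6 conformance
print(evaluate(Request("agent-42", True, 0.9, "transfer", 500), audit_log))
# allowed
print(len(audit_log))  # 4
```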

Where vendors actually live

Most of the noise in the agent infrastructure market right now comes from vendors operating at one or two layers and claiming to solve the whole stack. The cleanest mental hygiene is to ask any vendor: "Which layers do you operate at, and which layers do you assume someone else handles?" Be deeply suspicious of any answer that says "all of them."

Identity and reputation vendors operate at Layers 2 and 3, with potential extension into Layer 4 via policy attestations.

Formal verification vendors operate at Layer 6 primarily, with significant Layer 4 presence because typed specs are the formal expression of the operator's commitments, and Layer 7 contributions through structured action traces.

Frontier model labs operate at Layer 1, with increasing presence at Layers 8 and 10 via their own product surfaces.

Orchestration platforms operate at Layer 8 primarily.

Cloud TEE providers operate at Layer 0.

The architectural insight worth carrying into commercial conversations is that a complete agent trust story requires every layer to be addressed by someone, and the customer needs to know who owns each one. Vendors who answer that clearly win sophisticated buyers. Vendors who blur it lose them.